When Jeff Bezos was 11 years old, he discovered an odd-looking machine in the hallway of his elementary school. It was a link to a primitive mainframe computer, “with a teletype that was connected by an old acoustic modem. You literally dialled a regular phone and picked up the handset and put it in this little cradle.”

None of the teachers knew how to operate the machine, but Bezos worked through a stack of manuals and, staying for hours after school, made a discovery.

“The mainframe programmers in some central location somewhere had programmed a game based on Star Trek.”

Bezos became obsessed, spending hours playing out battles on the machine in the hallway. When the time came to act out Star Trek in the playground, the other kids would scramble to play the role of Spock or Captain Kirk.

“I would take the computer. The computer was fun to play because people would ask you questions.”

A Star Trek computer in your home

In November 2014, Amazon revealed its own fledgling version of the Star Trek computer.

Alexa is an always-on artificial intelligence, actively waiting for the next voice command to help.

She can be commanded to play music tracks, adjust the sound volume or dim the lights, call an Uber or add items to a grocery list.

There are no buttons, screens, keyboards – just ‘voice.’

And the more we speak to her, the more personal she gets. Alexa is constantly learning about our moods, our habits, our interests.

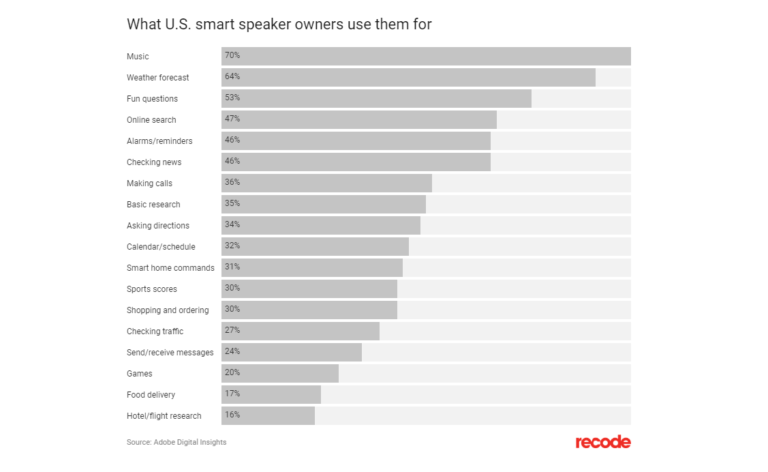

In a recent survey, the top things that UK users want from their smart speaker included…

“Help telling jokes”.

“Help distracting the kids”.

“Help to be funnier and more attractive”.

In fact, smart speakers are fast becoming the most popular device since the iPhone. Juniper Research predicts that 55% of US households will have a smart speaker by 2022.

This will be a subtle invasion. Amazon lab researchers working on Alexa with Bezos report that, again and again, he vetoes features because they would stop Alexa from being simple and hassle-free: invisible, ever present and helpful.

And Bezos won’t stop at the home.

Amazon (and Google, Microsoft and a whole range of startups) are investing billions in voice tech – exploring new systems that can analyse your voice to determine everything from your emotional state to your mental health to your risk of a heart attack.

A Voice in Your Car

Take Amazon’s recent rollout of Echo Auto.

From Wired…

Amazon introduced the road-going, Alexa-equipped device in September of last year, and started shipping to some customers in January.

About the size and shape of a cassette, the Echo Auto sits on your dashboard and brings 70,000 Alexa skills into your car. Its eight built-in microphones let you make phone calls, set reminders, compile shopping lists, find nearby restaurants and coffee shops.

Amazon is working with some automakers to build Alexa into new cars, but the $50 Auto works with tens of millions of older vehicles already on the road: All you need is a power source (either a USB port or cigarette lighter) and a way to tap into the car’s speakers (Bluetooth or an aux cable).

So far Amazon has sold about 1 million of these devices.

And it makes sense to have a hands-free device that won’t distract you from the road.

You can speak to it, make phone calls, keep yourself entertained with jokes and local knowledge…all while keeping your hands on the wheel and eyes ahead.

And as you put in the miles, slowly, this AI will get to know you.

It will measure the tone and tempo of your voice.

It will compare your speech patterns to its database of human moods and emotions.

The science of this kind of vocal analysis has been developing for decades, but cheaper computing power and new AI tools have made it possible to build a system that you will be very happy to talk to.

For example, AI startup Affectiva has recently teamed up with Nuance Communications (NASDAQ: NUAN) to add emotional intelligence to Nuance’s conversational car assistant.

The assistant is called Dragon Drive.

It uses cameras to detect facial expressions such as a smile or a yawn, and a microphone to pick up on vocal expressions.

The idea is to recognise when you are drowsy or in need of stimulation at the wheel.

It captures visceral, subconscious reactions – your facial expression or the way your eyes dilate or close for a second or two.

And it gives you a nudge when you need it.

Or perhaps it just lightens the mood on a long road journey.

Helping with Road Rage

Some of these emotions will be easy for the AI to read. It is already well used to recognising swear words, shouting and blind rage.

But it will eventually learn to pick up on more subtle disturbances.

For example, the Mayo Clinic conducted a two-year study that ended in February 2017 to see if voice analysis was capable of detecting coronary artery disease.

In collaboration with voice-AI company Beyond Verbal, the study used machine learning to identify what it thought were the specific voice biomarkers that indicated coronary artery disease. The clinic then tested groups of people who were scheduled to get angiograms, and matched the patterns.

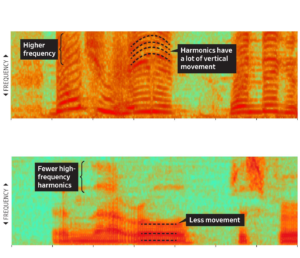

With spectrograms (pictured), we can train AI to analyse the character of a speaker’s voice, with harmonics (dark parallel lines) reflecting changes in the speaker’s pitch and intonation.
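To make the idea of harmonics concrete, here is a minimal Python sketch. It is a toy illustration only, not how Beyond Verbal, Pindrop or any other company's system works. It builds a synthetic "voice" from a base pitch plus two weaker harmonics (the sample rate, frame size and amplitudes are all assumed values chosen for the example), computes the frequency content of one short frame (one vertical slice of a spectrogram), and lists the strongest frequencies.

```python
import cmath
import math

SAMPLE_RATE = 8000  # samples per second (assumed for this toy example)
FRAME_SIZE = 400    # 50 ms frame, so each frequency bin is 20 Hz wide


def synth_voice(f0, n_samples, sample_rate=SAMPLE_RATE):
    """Toy 'voiced' sound: a fundamental at f0 plus two weaker harmonics."""
    amps = [1.0, 0.5, 0.25]  # hypothetical harmonic strengths
    return [
        sum(a * math.sin(2 * math.pi * f0 * (k + 1) * n / sample_rate)
            for k, a in enumerate(amps))
        for n in range(n_samples)
    ]


def magnitude_spectrum(frame):
    """Naive DFT magnitudes for bins 0..N/2: one column of a spectrogram."""
    n = len(frame)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(frame)))
        for k in range(n // 2 + 1)
    ]


def strongest_frequencies(frame, count=3, sample_rate=SAMPLE_RATE):
    """Return the `count` strongest frequencies in Hz, lowest first."""
    mags = magnitude_spectrum(frame)
    top_bins = sorted(range(len(mags)), key=lambda k: mags[k], reverse=True)[:count]
    return sorted(k * sample_rate / len(frame) for k in top_bins)


# Two frames spoken at slightly different pitches.
low_pitch = strongest_frequencies(synth_voice(200, FRAME_SIZE))
high_pitch = strongest_frequencies(synth_voice(220, FRAME_SIZE))
print(low_pitch)   # the fundamental and its two harmonics
print(high_pitch)  # the same pattern, shifted upwards
```

When the pitch rises from 200 Hz to 220 Hz, all three lines move up together. Stacking many such slices side by side gives the parallel bands seen in a spectrogram, and it is the movement of those bands over time that a model can be trained on.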

This kind of voice analysis is already being used in the financial industry to stamp out fraud.

AI startup Pindrop helps prevent voice fraud by analysing nearly 1,400 acoustic attributes in a voice, such as tone, speed, pitch, harmonics and other patterns.

The system can help organisations figure out if you are really you when you call in on the phone, which still accounts for up to 78% of how we choose to interact with our merchants, government agencies and banks.

In the next few years, we will witness a storm of AI and compounding collections of new data as we increasingly interact with screenless devices by voice and, eventually, with head gestures.

We will be nodding, shaking our heads, speaking and listening to AI in our homes and cars, perhaps even in public.

According to comScore, by 2020:

- 200 billion searches a year will be done by voice, yielding a $50 billion market in voice search, with compounding risks of fraud and identity theft.

- We will speak rather than type more than half of our Google search queries.

- The market for ads delivered in response to voice queries will be $12 billion.

- Aside from the market for voice search, there will be a $16 billion ‘wearables’ market for bean-sized earbuds that translate, filter out ‘noise’ and let us control our devices by voice.

Of course there are very real limits to this technology.

The first issue is privacy.

Will these voice assistants always be recording?

How can we be sure that those audio files are private?

And what happens if your voice is cloned and then used to send blackmail messages or requests for money to your contact list?

The technology for cloning a voice is already available.

In fact, as Baidu has recently demonstrated, it takes just 3.7 seconds of audio to clone a voice.

The AI on the horizon looks like Alexa – affordable, reliable, industrial-grade digital smartness that follows you everywhere.

At what point will that become oppressive?

My instinct is that we will soon give machine learning algorithms access to our most intimate thoughts and impulses. Devices will relay useful data to networks, where it will be parsed and processed with millisecond delays, presenting us with a branching tree of decisions about almost every routine in the day.

And this will create huge investment opportunities.

Right now, the voice tech market is dominated by the likes of Amazon, Google and Microsoft.

But it’s also well worth looking at companies such as Nuance Communications and Affectiva, both of which are well placed to profit as voice technology colonises cars, homes and advertising.